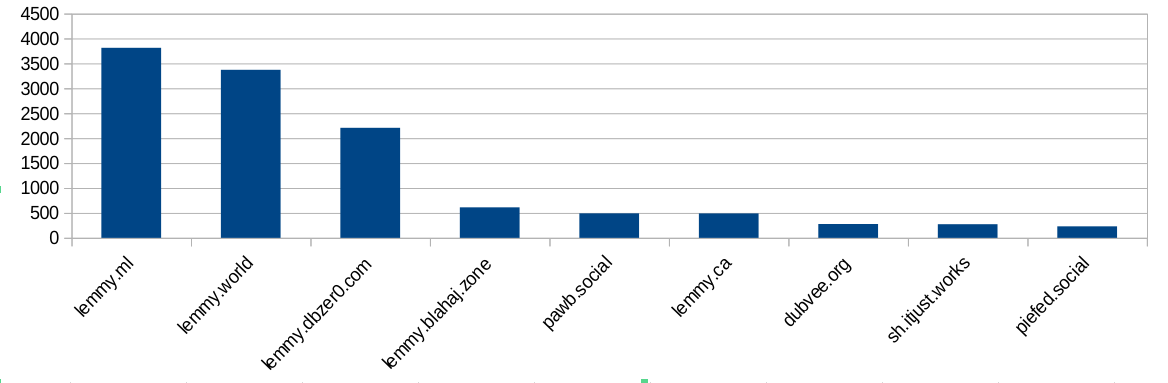

I did some analysis of the modlog and found this:

Ok, bigger instances ban more often. Not surprising, because they have more communities and more users and more trouble. But hang on, dbzer0 isn’t a very big instance. What happens if we do a ratio of bans vs number of users?

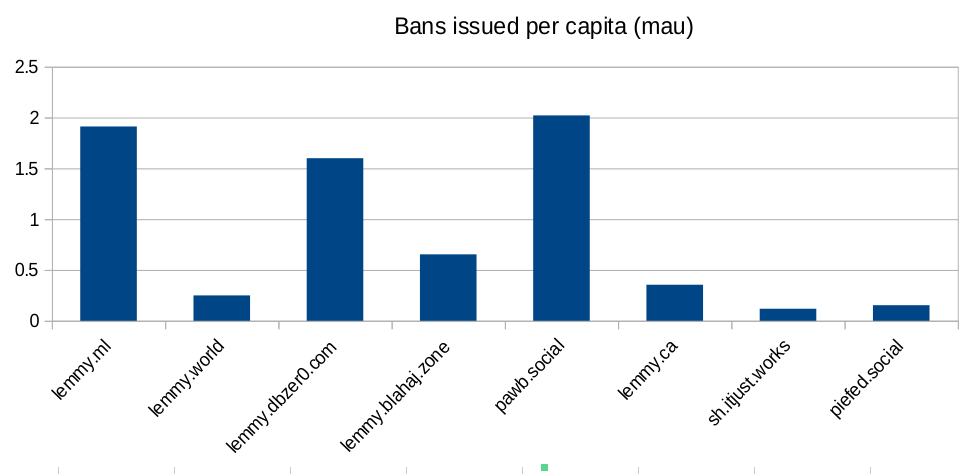

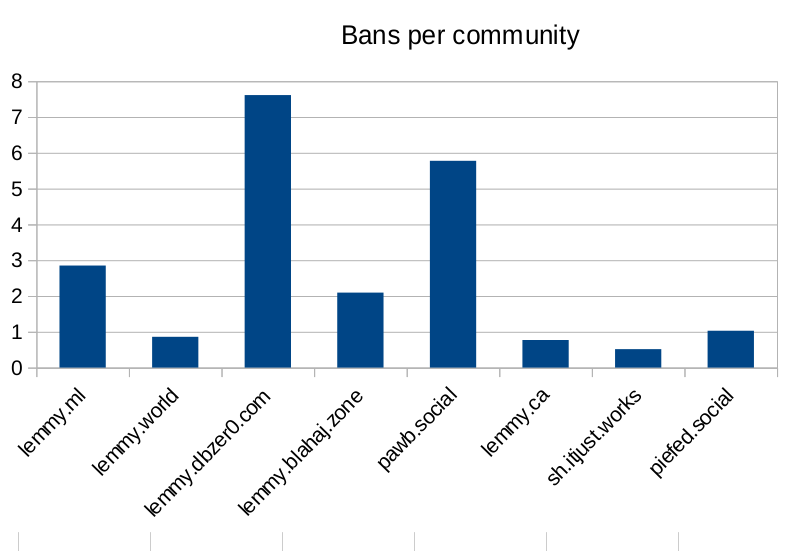

Ok, so lemmy.ml, dbzer0 and pawb are issuing an outsized number of bans for the number of users they have… But surely the number of communities an instance hosts means it has to ban more? Bans are used to moderate communities, not just to shield an instance's user-base from the outside. Let’s look at the number of bans per community hosted:

Seems like dbzer0 really loves to ban. Even more than the marxists and the furries! What is it about dbzer0 that makes them such prolific banners?

Raw-ish numbers and calculations are in this spreadsheet if anyone wants to make their own charts.
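For anyone who would rather script it than use the spreadsheet, here is a rough sketch of the two ratios described above (bans per user and bans per community hosted). The instance names and counts in `SAMPLE` are made-up placeholders, not the real modlog numbers, and `InstanceStats` and `ban_ratios` are just names picked for this example.

```python
from dataclasses import dataclass


@dataclass
class InstanceStats:
    name: str
    bans: int          # total bans found in the modlog
    users: int         # registered users on the instance
    communities: int   # communities hosted by the instance


def ban_ratios(stats):
    """Return (name, bans_per_user, bans_per_community) tuples,
    sorted by bans per community, highest first."""
    rows = []
    for s in stats:
        # Skip instances where a ratio would divide by zero.
        if s.users == 0 or s.communities == 0:
            continue
        rows.append((s.name, s.bans / s.users, s.bans / s.communities))
    return sorted(rows, key=lambda r: r[2], reverse=True)


# Placeholder data only: NOT the real figures from the spreadsheet.
SAMPLE = [
    InstanceStats("instance_a", bans=1000, users=50000, communities=500),
    InstanceStats("instance_b", bans=400, users=4000, communities=50),
]

rows = ban_ratios(SAMPLE)
for name, per_user, per_community in rows:
    print(f"{name}: {per_user:.3f} bans/user, {per_community:.1f} bans/community")
```

Swap in the real counts to reproduce the charts. Sorting by bans per community is an arbitrary choice here; change the sort key to `r[1]` to rank by bans per user instead.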

This is a very ignorant comment. Consciousness is legitimately the greatest unsolved problem in modern science and philosophy.

Not really. It’s an emergent property of our biological processes. It’s not some nebulous thing like you and grail seem to think. Everything that lives is self aware and has some degree of consciousness. Without mimicking any of the biological processes and functions that living things have there can be no functional consciousness that’s close enough to our understanding of consciousness to be relevant. You both sound like high schoolers that got high for the first time and had their very first deep thoughts that weren’t actually deep, just really really stupid.

With all due respect, you simply do not know what you are talking about. Here is some info on this topic if you are interested in learning more.

Ok great. Now, explain specifically and with examples how exactly any of that is relevant to LLMs.

Whether or not LLMs are conscious depends on what theory of consciousness you are working on, and there is no universally accepted theory of consciousness. Every theory of consciousness is controversial, and most experts would probably agree that the correct theory has yet to be discovered. The point is that we simply don’t know enough about consciousness to confidently say which systems have it and which systems don’t.

So we can’t even prove consciousness is actually real in the first place. All we know is that we have a subjective experience we rationalize through the concept of consciousness, and given that this is the only experiential notion of it in the first place, it makes no sense to ever apply it to an algorithm. We can have a separate philosophical discussion about whether humans are anything other than input->output machines with a bunch of fancy software that tricks us into thinking that we’re thinking, but as of yet there’s no reason to think any computer program in existence is anything other than a fancy calculator. Calculators aren’t conscious, or intelligent, or thinking, or capable of subjective experience. The entire position is based on a null hypothesis. I do believe a computer could eventually become conscious, but not any computer humans are capable of building or programming any time soon, if ever.

The theory you’re discussing is called eliminative materialism (or illusionism) and it’s detailed in the link I sent you, along with a host of other metaphysical theories. Like every other theory, eliminative materialism has significant issues.

Theories of mind don’t apply to computer programs.

https://en.wikipedia.org/wiki/Computational_theory_of_mind

It’s the astounding mix of complete ignorance and supreme arrogance that makes people like you so supremely loathsome.

Oh fuck off grail’s alt.

“Surely multiple people couldn’t disagree with me, I’m the smartest and specialest boy!”