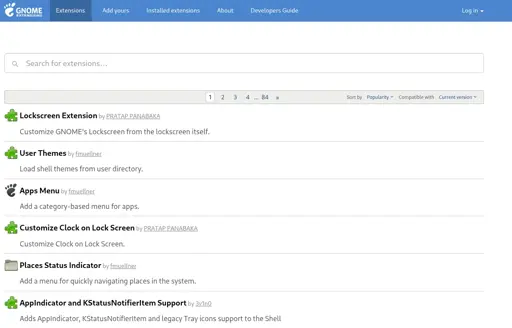

The GNOME.org Extensions hosting for GNOME Shell extensions will no longer accept new contributions with AI-generated code. A new rule has been added to their review guidelines to forbid AI-generated code.

Because a growing number of AI-generated GNOME Shell extensions are being submitted to extensions.gnome.org, such submissions are now prohibited. The new rule in the guidelines notes that AI-generated code will be explicitly rejected.

We do this all the time. I’m certified for a whole bunch of heavy machinery; if I were worse at it, people would’ve died

And even then, I’ve nearly killed someone. I haven’t, but on a couple occasions I’ve come way too close

It’s good that I went through training. Sometimes, it’s better to restrict who is able to use powerful tools

People have already died because of AI. It’s cute when the AI tells you to put glue on your pizza or asks you to leave your wife; it’s not so cute when architects and doctors use it

Bad information can be deadly. And if you rely too hard on AI, your cognitive abilities drop. It’s a simple mental shortcut that works on almost everything

It’s only been like 18 months, and already it’s become very apparent a lot of people can’t be trusted with it. Blame and punish those people all you want, it’ll just keep happening. Humans love their mental shortcuts

Realistically, I think we should just make it illegal to have customer facing LLMs as a service. You want an AI? Set it up yourself. It’s not hard, but realizing it’s just a file on your computer would do a lot to demystify it

Well I was just arguing that people generally are using AI irresponsibly, but if you want to get specific…

You say ban the users, but realistically how are they determining that? The only way to reliably check if something is AI is human intuition. There’s no tool to do that, it’s a real problem

So effectively, they made it an offense to submit AI slop. Because if you just use AI properly as a resource, no one would be able to tell

So what are you upset about?

They did basically what you suggested, they just did it by making a rule so that they can have a reason to reject slop without spending too much time justifying the rejection

I don’t think you understand what doing code reviews is like.

So someone submits terrible code. You don’t get to just say “this is bad code” and reject it wholesale, you have to explain in exhaustive detail what the problems are. Doing otherwise leads to really toxic environments. It’s killed countless projects

That’s why you write rules. You don’t have to argue if they need tests or not, you tap the sign and reject it without actually reviewing it if it doesn’t meet the requirement

Same thing here. You open up vibe coded nonsense so you tap the sign and reject it without bothering to review it. Do the same thing with “bad code” as a reason and it starts insane drama.

People are really sensitive about their code, and there’s a whole methodology around how to do reviews without ending up in a screaming match

They should state a justification, not merely what they look for to identify AI-generated code.

The justification could be that the author is unlikely to be capable of maintaining the extension, in which case it just becomes a burden on others.

So far there is no justification stated besides “da fuk” and “yuk”.

A saner organization would also hit up submitters for a reviewer’s fee. This would reduce AI spam. Barriers to entry matter.

A reviewer’s fee is equivalent to Canonical offering customer support contracts. Obviously, a person who needs to lean on AI as a crutch is just screaming out for reviewers to act as advisers. A reviewer wielding the giant DENIED stamp is fun, but it doesn’t address the issue of noobs implicitly asking to work with a consultant.

GNOME reviewers obviously never miss an opportunity to miss an opportunity.

Have you read the first paragraph of the linked article? It quotes the criteria right there: "Extensions must not be AI-generated

While it is not prohibited to use AI as a learning aid or a development tool (i.e. code completions), extension developers should be able to justify and explain the code they submit, within reason.

Submissions with large amounts of unnecessary code, inconsistent code style, imaginary API usage, comments serving as LLM prompts, or other indications of AI-generated output will be rejected."

Maybe instead of commenting under every comment that likes this change, read the article first? AI is fine if your code is fine and you understand it. If the reviewer has to argue with an LLM because the submitter just pastes the text into his LLM and then posts the output of said LLM back to the reviewer, it is a huge waste of time. This doesn’t happen if the person submitting the code understands it and made sure that the code is fine.

Everyone commenting has read and understood the article. Perhaps the nuance of the conversation is just going over your head. Your commentary is your personal opinion, which is outside of the source material. What you copy+pasted is exactly what we’ve commented on.

The article never said “reviewing GNOME extensions, where an LLM was used, is a huge waste of time”. You are adding to what’s said. The adults are not pulling from outside the article.

We are stating what the article lacks. We are not hallucinating. So if we are not hallucinating then you must not be following. Reread it a few times until you get it.

Maybe read the original blog post from the GNOME dev then? The post the article references… it says right there why the AI code is a problem: it has too much unnecessary code in it, and reviewing that takes time. The author also says the submitted AI code doesn’t adhere to good practices.